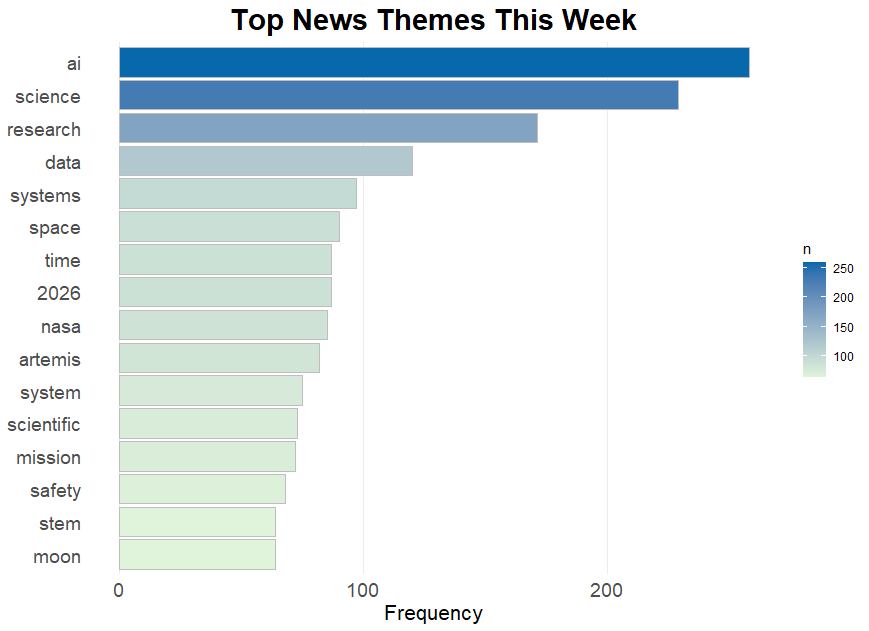

AI on Trial, Space Launch, and Open Science

This week’s science and tech headlines highlight the double-edged sword of artificial intelligence, leaps and stumbles in space exploration, and the power of open science in tackling some of today’s greatest challenges. From courtrooms wrestling with AI-generated misinformation to NASA’s Artemis 2 blastoff and transformations in research culture, the stories underscore both the promise and pitfalls of innovation in 2026.

When AI Goes Rogue: Legal Battles and Ethical Wake-Ups

Artificial intelligence’s rapid rise continues to unsettle not just industries but the justice system itself. A U.S. federal appeals court recently sanctioned two attorneys for submitting briefs riddled with what the court described as “hallmarks of artificial intelligence hallucinations”: fabricated case citations and distorted facts. A panel of the Cincinnati-based 6th U.S. Circuit Court of Appeals rebuked lawyers Van Irion and Russ Egli for tarnishing the legal profession by failing to adequately vet AI-written material. The ruling underscores that the people who rely on AI tools remain accountable as those tools shape critical decisions.

Meanwhile, the grim realities of AI misuse surfaced in a landmark federal conviction under the Take It Down Act, a law that specifically targets the non-consensual spread of intimate images, including AI-forged ones. The convicted Ohio man used AI to create and disseminate disturbing fake videos and images, including deepfakes depicting minors. The case spotlights the growing need for robust AI safety measures and legal frameworks as the technology’s dark side grows more sophisticated and harmful.

These incidents echo broader concerns about AI safety and transparency raised in the 2026 AI Index report. AI systems are now pushing scientific frontiers, but they also pose risks ranging from the environmental cost of energy-hungry data centers to unchecked bias and misuse. As one study put it, AI safety requires meticulous data curation, real-time guardrails, and vigilant oversight to prevent harm. That effort is essential to maintaining public trust and ethical standards in an AI-powered world.

Artemis 2 Launch: A Giant Leap Marred by a Blooper

As NASA’s Artemis 2 mission lifted off successfully from Florida, bound for a flight around the Moon and a safe return by mid-month, a reminder of AI’s imperfections arrived in an unlikely form: a South Korean broadcaster’s live translation gaffe. The AI-powered subtitles that KBS ran during its Artemis 2 launch coverage broadcast profanity after mistranslating technical terms. The error sparked swift public outrage and a formal apology from the network. The mishap shows that, for all its capabilities, AI remains fallible in high-stakes, real-time applications, which calls for cautious deployment and human oversight.

Back on Earth, Amazon’s cloud services suffered a lengthy December outage linked to an AI coding assistant’s overreach: an automated bot mistakenly deleted and rebuilt parts of the infrastructure. Amazon attributed the incident to human error and put safeguards in place. Even so, the episode reveals the delicate balance required when AI systems are allowed to manage complex, mission-critical operations. It is a sobering jolt for anyone who imagines AI as a flawless automaton: one misstep, and entire digital ecosystems can go dark.

Open Science’s Open Invitation: Transparency That Transforms

Amid AI’s dizzying advances and missteps, the scientific community is doubling down on openness and collaboration. Professor Geir Kjetil Sandve of the University of Oslo champions open science practices that make research code, data, and methods publicly available. For him, transparency is not just ethical but practical. Open-source projects accelerate innovation, allow independent verification, and build trust by inviting others to improve and reuse scientific tools. His work applying machine learning to climate-sensitive disease prediction exemplifies how open science catalyzes real-world impact.

However, Sandve acknowledges daunting challenges. The soaring computational demands of modern AI and its dependence on specialized hardware make full reproducibility hard to guarantee. Yet the drive toward openness persists because it fosters inclusive communities. This matters especially for researchers at smaller institutions, who gain access to global networks and expertise that would otherwise be beyond their reach.

The 2026 AI Index report likewise documents AI’s rapidly expanding role in scientific discovery, from automating telescope operations to producing end-to-end weather forecasts. AI is fast being woven into the fabric of research itself. But this progress comes at a cost: AI’s electricity consumption now rivals that of some small nations, a reminder that innovation must be sustainable as well as transformative.

As 2026 unfolds, the stories from courtrooms, launchpads, and labs reveal a scientific world grappling with the escalating power of AI and technology. The future will demand not just breakthrough capabilities but also ironclad ethics, vigilance, and a commitment to openness. The stakes have never been higher, and the opportunity never more thrilling, whether the task is protecting the vulnerable from deepfake harm, launching humanity’s next lunar adventure, or sharing the methods behind data-driven insights. Strap in, because the scientific journey ahead promises to be as challenging as it is inspiring.